Remote Firmware Upgrades

When there’s WiFi in the Amazon

Pretty much every sensor deployment we’ve done has been to remote areas with little or no connectivity. It can take days to reach some locations, either off-roading through unforgiving terrain, boating in over crocodile-infested waters, or hiking over rocks, ice, and snow. Sometimes we’ve been able to get status over satellite, but the bandwidth and power budget usually mean that the truly useful status and diagnostic information is left sitting idly on disk until the station can be visited again physically. It’s stressful setting up a station and then leaving the poor thing behind, hoping that nothing was forgotten and that enough testing was done.

Over the last few months our efforts have largely revolved around some work we’re doing with WCS and FIU in the Amazon jungle. Most of the stations there have been of the breed we’re used to, left on their own to fend for themselves. Recently we got word that a future site would have WiFi, which for us is a pretty unique opportunity for two reasons. First, we’ll be able to get higher-fidelity diagnostic information and data from these stations. Second, given the right preparation, we’ll be able to service the firmware on these stations remotely.

Being able to remotely upgrade firmware is a feature I’ve been wanting for a while. Given the state of the FieldKit project, though, we’ve never really had a reason to expend the effort on it. This recent news was a great opportunity to justify that initial groundwork.

Now that the feature is implemented and being tested, I wanted to write up a post covering what it took. So, get ready, this is a software-heavy post.

Overview

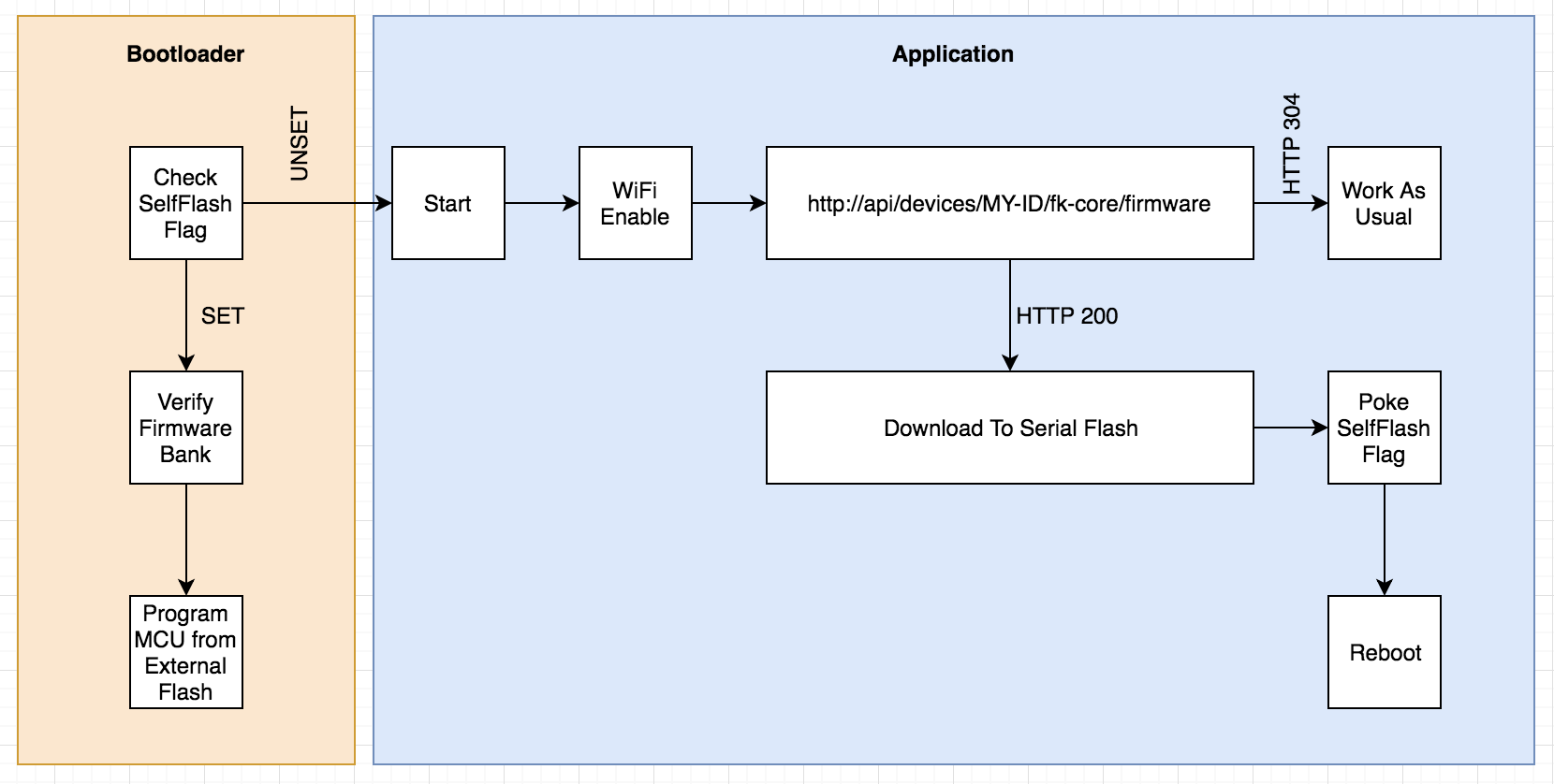

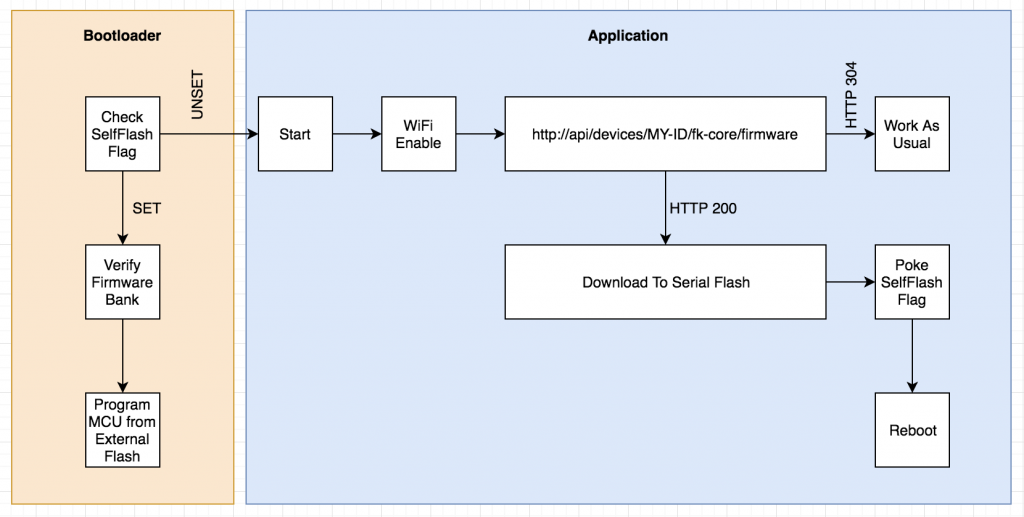

At a high level, the basic premise is that the station periodically checks with our servers to see if there is new firmware available. If there is, the firmware is downloaded and stored in the Serial Flash chip. Once the download is complete and verified, the MCU sets a flag in memory indicating that the self-flash should be done and then restarts itself. At startup our custom bootloader checks for this flag and, if it is set, reprograms the MCU’s flash memory from the binary in the external flash chip.
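
The application-side half of that sequence can be pictured as a small state machine. The following is only a sketch under assumptions: the state names, the `update_next` function, and the `SELF_FLASH_MAGIC` value are all hypothetical, and the real firmware may be organized quite differently.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical magic value; the real one is not given in this post. */
#define SELF_FLASH_MAGIC 0x5E1FF1A5u

typedef enum {
    UPDATE_IDLE,        /* waiting for the next periodic check */
    UPDATE_DOWNLOADING, /* writing the new binary to serial flash */
    UPDATE_VERIFYING,   /* checking the binary that was stored */
    UPDATE_RESTARTING   /* flag is set, the MCU should reset now */
} update_state_t;

/*
 * Advance the update sequence by one step. `step_ok` reports whether the
 * current step succeeded: "new firmware is available" when idle, "download
 * finished" when downloading, "image verified" when verifying. Any failure
 * drops back to idle so the next periodic check can start over.
 */
update_state_t update_next(update_state_t state, bool step_ok,
                           volatile uint32_t *self_flash_flag)
{
    switch (state) {
    case UPDATE_IDLE:
        return step_ok ? UPDATE_DOWNLOADING : UPDATE_IDLE;
    case UPDATE_DOWNLOADING:
        return step_ok ? UPDATE_VERIFYING : UPDATE_IDLE;
    case UPDATE_VERIFYING:
        if (!step_ok) {
            return UPDATE_IDLE;
        }
        /* Only a verified image is allowed to request a self-flash. */
        *self_flash_flag = SELF_FLASH_MAGIC;
        return UPDATE_RESTARTING;
    case UPDATE_RESTARTING:
    default:
        return UPDATE_RESTARTING;
    }
}
```

The point of the sketch is the ordering: the flag is written only after verification succeeds, so a partial or corrupt download never reaches the bootloader.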

When remotely upgrading module firmware the process is very similar. The Core module (the one with the WiFi) checks whether any attached module’s firmware is outdated and downloads the binaries if necessary. Each binary is then transferred to its module over I2C and verified, and the module restarts itself in a similar fashion.
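
To make the "transferred over I2C, verified" step concrete, here is a minimal sketch of what the receiving module might do. It assumes the binary arrives in small chunks and that verification is a CRC-32 over the whole image; the post does not say which check is actually used, and the 32-byte chunk size and all of the names below are hypothetical.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Assumed chunk size; the real transfer size is not stated in the post. */
#define I2C_CHUNK_BYTES 32u

/* Standard reflected CRC-32 (polynomial 0xEDB88320), computed bit by bit.
 * Pass 0 for the first call and the previous result for later calls. */
uint32_t crc32_update(uint32_t crc, const uint8_t *data, size_t len)
{
    crc = ~crc;
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int bit = 0; bit < 8; bit++) {
            crc = (crc & 1u) ? (crc >> 1) ^ 0xEDB88320u : (crc >> 1);
        }
    }
    return ~crc;
}

/* Running state kept by the module while chunks arrive. */
typedef struct {
    uint32_t crc;
    size_t received;
} transfer_t;

void transfer_begin(transfer_t *t)
{
    t->crc = 0;
    t->received = 0;
}

/* Called once per chunk, after the chunk has been written to serial flash. */
void transfer_chunk(transfer_t *t, const uint8_t *data, size_t len)
{
    t->crc = crc32_update(t->crc, data, len);
    t->received += len;
}

/* The module should only flag itself for flashing when this returns true. */
bool transfer_verify(const transfer_t *t, size_t expected_len,
                     uint32_t expected_crc)
{
    return t->received == expected_len && t->crc == expected_crc;
}
```

Because the CRC is updated per chunk, the module never needs the whole binary in RAM, which matters on a chip with far less RAM than flash.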

This is one area where our decision to include serial flash memory as a standard “Module” feature paid off. Without it, this process would have been more awkward.

It’s important to us that all of the work we do fit comfortably within the OSS/OSH ecosystem that’s evolved from Arduino and similar platforms. This feature is our largest deviation from that ecosystem so far, though it’s still possible to use our code and hardware with standard bootloaders and simply forgo remote upgrades in your own projects.

Digging into Bootloaders

Most “maker”-focused development boards in the Arduino ecosystem come pre-installed with a bootloader of some kind. This is a small program, usually under 8KB or so, that runs before application code and provides friendlier ways of programming the MCU. For example:

- Presenting the MCU as a USB storage device so you can simply copy new firmware files over.

- Checking for “double taps” of a physical button that places the MCU in a “ready to program” state.

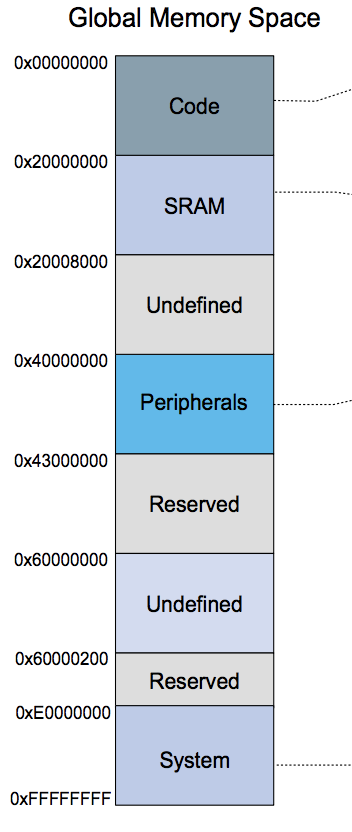

Now would be a good time to mention that all of our boards use the ATSAMD21G18 chip, the same one used on the Arduino Zero boards and the Feather M0 line. So most of what’s here applies to them and to other Cortex-M chips.

Our task in the bootloader is to check a pre-determined memory location for a magic value indicating that the application has left behind new firmware that should be flashed. This is similar to how the “double tap” checks are sometimes done, so we opted to use that same memory location with a different magic value from the one used for the double tap.
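
A rough sketch of that check follows. Both magic values and all of the names are placeholders rather than the ones in our bootloader, and clearing the flag before acting on it is an assumption made here so that a failed flash cannot cause an endless reboot loop.

```c
#include <stdint.h>

/* Placeholder magic values; the real ones are not given in this post. */
#define DOUBLE_TAP_MAGIC 0x07738135u
#define SELF_FLASH_MAGIC 0x5E1FF1A5u

typedef enum {
    BOOT_RUN_APPLICATION,    /* nothing requested, jump to the application */
    BOOT_STAY_IN_BOOTLOADER, /* double tap: wait to be programmed */
    BOOT_FLASH_FROM_SERIAL   /* copy the pending binary from serial flash */
} boot_action_t;

/*
 * Decide what to do at startup from a single word of RAM shared by both
 * mechanisms. `flag` points at the pre-determined location; on real
 * hardware it would be a fixed address that survives a reset.
 */
boot_action_t boot_decide(volatile uint32_t *flag)
{
    uint32_t value = *flag;

    /* Consume the request so it is acted on at most once. */
    *flag = 0;

    if (value == SELF_FLASH_MAGIC) {
        return BOOT_FLASH_FROM_SERIAL;
    }
    if (value == DOUBLE_TAP_MAGIC) {
        return BOOT_STAY_IN_BOOTLOADER;
    }
    /* Anything else, including random power-on contents, means "boot". */
    return BOOT_RUN_APPLICATION;
}
```

Using two distinct magic values in one location keeps the two features from interfering: a word that matches neither value is simply ignored.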

This means that our custom bootloader had to learn a few new tricks. Specifically we needed:

- Access to one of the hardware UARTs for debugging purposes.

- Access to the SPI peripheral so we could talk to our external serial flash chip.

- File system code for accessing and reading the data in the serial flash chip (more on this later).

Note that most bootloaders are kept as small as possible so that more space is left over for application code. Once all the above functionality was implemented our bootloader had outgrown its original 8KB home, ballooning to around 22KB. On our chip we have 256KB of flash and our largest firmware weighs in at around 150KB, leaving plenty of room for a larger bootloader. We settled on setting aside 32KB, for now.
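
The arithmetic behind that choice can be written down as a handful of constants. This is a sketch that assumes the bootloader sits at the very start of flash, so the application begins right after the reserved 32KB; the macro and function names are made up for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

#define FLASH_TOTAL_BYTES         (256u * 1024u) /* flash on our chip */
#define BOOTLOADER_RESERVED_BYTES (32u * 1024u)  /* set aside, for now */

/* Assumption: bootloader at address 0, application directly after it. */
#define APPLICATION_START_ADDRESS BOOTLOADER_RESERVED_BYTES
#define APPLICATION_MAX_BYTES \
    (FLASH_TOTAL_BYTES - BOOTLOADER_RESERVED_BYTES) /* 224KB */

/* True when a downloaded image could be flashed without running past
 * the end of the MCU's flash. */
static inline bool firmware_fits(uint32_t image_bytes)
{
    return image_bytes <= APPLICATION_MAX_BYTES;
}
```

With these numbers a 150KB firmware leaves roughly 74KB of headroom for the application, and the 22KB bootloader has about 10KB to grow before the reservation needs revisiting.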

File System

The proof of concept for this feature simply wrote the new firmware to a fixed location on the serial flash chip and the bootloader knew to start reading from there. From a wear leveling perspective this is probably fine given the infrequency of updates to firmware. Unfortunately, this basically dedicated 256KB of memory to these pending binaries. One thing we’d also started to investigate was storing a copy of the currently running firmware so that the device could decide to revert to a previous firmware if a problem was detected.

The serial flash memory was already being managed by our custom file system, Phylum, so we decided to add the ability to store variable-sized files with it. That gives us a few other benefits that are best addressed in a post dedicated to the file system work. In the end, each board can store up to four binaries: pending module and core updates, and copies of firmware known to be good.
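
One way to picture those four binaries is as named slots. The enum below is purely illustrative: it assumes one pending and one known-good copy each for core and module firmware, and none of these names come from Phylum or our firmware.

```c
#include <stdbool.h>

/* Hypothetical names for the four binaries a board can hold. */
typedef enum {
    SLOT_PENDING_CORE,   /* downloaded core update, not yet flashed */
    SLOT_PENDING_MODULE, /* downloaded module update, not yet sent */
    SLOT_GOOD_CORE,      /* core firmware known to be good */
    SLOT_GOOD_MODULE,    /* module firmware known to be good */
    SLOT_COUNT
} firmware_slot_t;

/* True for the slots that hold an update waiting to be applied. */
bool slot_is_pending(firmware_slot_t slot)
{
    return slot == SLOT_PENDING_CORE || slot == SLOT_PENDING_MODULE;
}

/* The known-good slot a device would fall back to if the given pending
 * update turned out to be bad. Non-pending slots map to themselves. */
firmware_slot_t slot_fallback_for(firmware_slot_t slot)
{
    switch (slot) {
    case SLOT_PENDING_CORE:
        return SLOT_GOOD_CORE;
    case SLOT_PENDING_MODULE:
        return SLOT_GOOD_MODULE;
    default:
        return slot;
    }
}
```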

This was also nice because the code for manipulating these files works just like the code for manipulating files on our SD card. While I’m mentioning the SD card, I should point out that because modules don’t have SD cards it made more sense for us to build this functionality around the serial flash.

Server Side Firmware Juggling

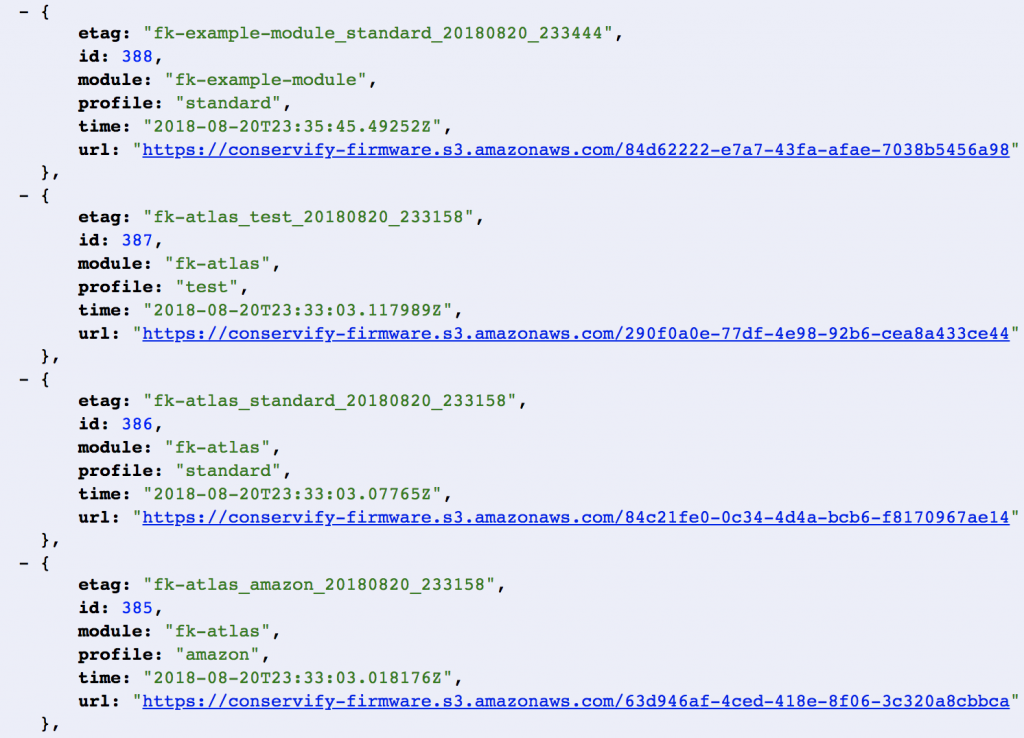

One of the easier parts of this feature was the server side code that juggles our firmware and distributes it to the modules as they “call in.” There were a few things I wanted:

- Specifying firmware at a per device level. Each device has a unique device-identifier and our tools allow users to specify which device should be running which firmware. This way we can test and run different binaries across a set of devices.

- Per module, per device firmware. One thing to keep in mind is that a particular station actually involves more than one board. For example a typical station would have one Core board and one Sensor board. When devices call in to check firmware they do so on behalf of each connected module and the Core itself.

- Bandwidth friendliness. Firmware should only be downloaded when the firmware changes. Just because we have WiFi in the Amazon doesn’t mean we can abuse the bandwidth we’ve got.

This means the Core firmware knows its own firmware version and the version of all attached modules. It then issues a query to our servers of the form:

https://api.fieldkit.org/devices/{device-id}/{module}/firmware

One of the headers we provide is the If-None-Match header that includes an ETag, giving the server the ability to respond with a small 304 Not Modified response in the case that the device’s firmware is unchanged.
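
As an illustration of that exchange, the sketch below builds the conditional request and maps the response status to an action. The function names, the enum, and the choice to treat every status other than 200 and 304 as an error are assumptions, not a description of our actual firmware or API behavior.

```c
#include <stddef.h>
#include <stdio.h>

/*
 * Write a conditional GET for one module's firmware into `out`. `etag` is
 * the tag stored alongside the firmware currently installed. Returns what
 * snprintf returns: the length needed, so a result >= out_len means the
 * request was truncated and must not be sent.
 */
int build_firmware_request(char *out, size_t out_len, const char *device_id,
                           const char *module, const char *etag)
{
    return snprintf(out, out_len,
                    "GET /devices/%s/%s/firmware HTTP/1.1\r\n"
                    "Host: api.fieldkit.org\r\n"
                    "If-None-Match: \"%s\"\r\n"
                    "Connection: close\r\n"
                    "\r\n",
                    device_id, module, etag);
}

typedef enum {
    FW_UNCHANGED, /* 304: keep running what we have, nothing downloaded */
    FW_DOWNLOAD,  /* 200: the body is a new binary, store it */
    FW_ERROR      /* anything else: try again at the next check */
} fw_action_t;

fw_action_t firmware_action_for_status(int http_status)
{
    switch (http_status) {
    case 304:
        return FW_UNCHANGED;
    case 200:
        return FW_DOWNLOAD;
    default:
        return FW_ERROR;
    }
}
```

In the common case where nothing has changed, the whole exchange is a few hundred bytes of headers instead of a binary that can run to 150KB, which is what makes frequent checks affordable on a shared connection.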

Build Server

I wanted to briefly mention that we rely heavily on our Jenkins server for managing our builds and some of our workflows. In fact, it’s through this server that new firmware gets fed to our servers so it can be distributed to devices. We’re actually planning to write a post dedicated to the way we use Jenkins internally, but for now the basic idea is that after a successful build, the compiled and tested binaries are uploaded to S3 and their metadata is recorded in our database. We then have a tool that we can use to associate one of those binaries with a device so that it’ll be downloaded and flashed on the next check-in.

Future Work

I briefly mentioned giving the devices the ability to revert themselves when they discover a problem with new firmware. This is still in our backlog until we can decide exactly what the criteria for reverting should be. For now, though, our goal is to test this remote update functionality as much as possible.